Launches that Learn

Chapter 5: The Launch Is No Longer a Moment — It’s a Self‑Learning Model

For years, launches have been treated like theater.

A date. A deck. A cascade of emails. A spike in activity followed by silence.

Then, inevitably, the post‑mortem:

“The market didn’t get it.”

“Sales didn’t use the messaging.”

“We’ll fix it in the next launch.”

That mental model is broken.

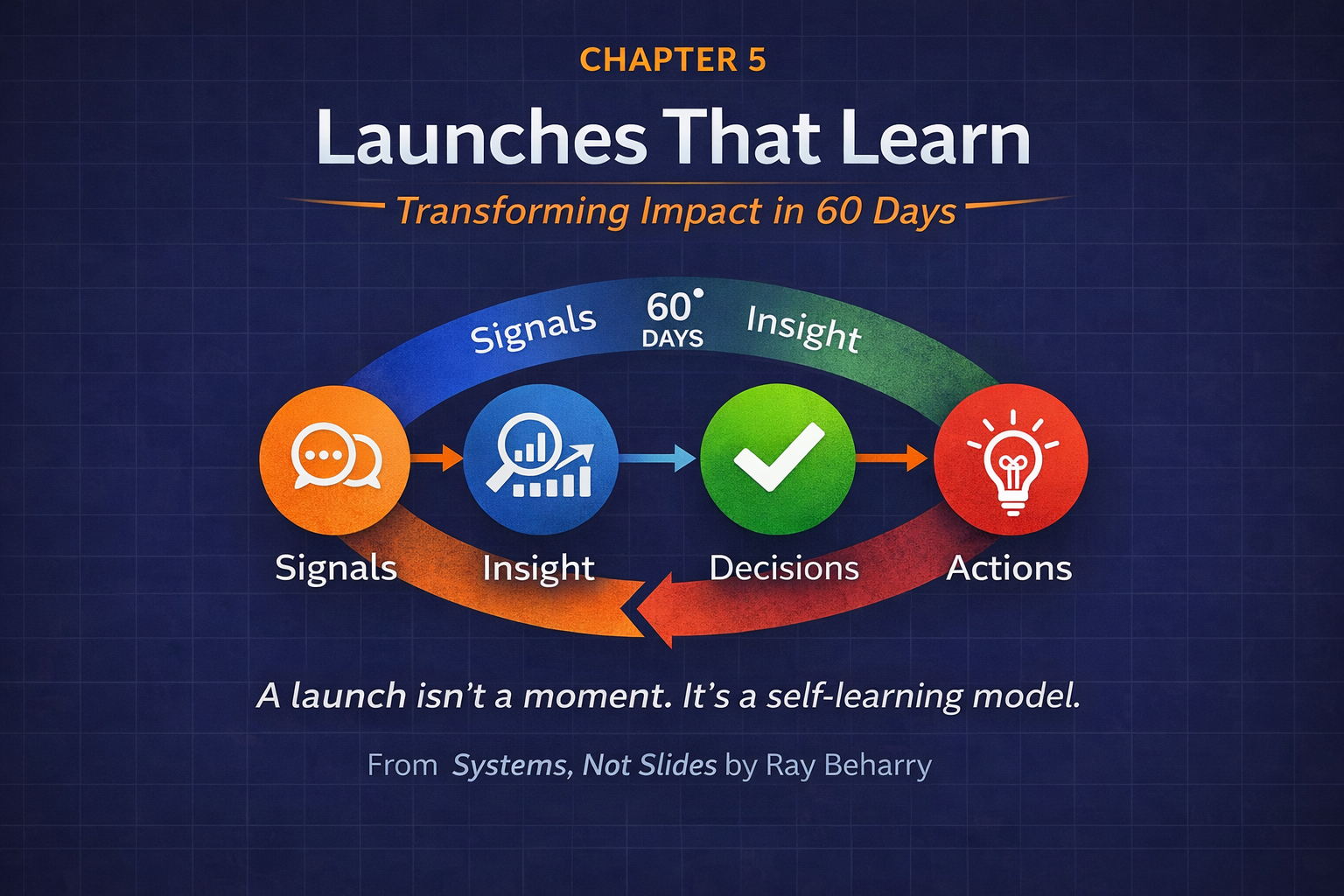

In modern product marketing, a launch is not an event — it’s a system. And like any good system, it should sense, learn, and adapt in real time.

If you remember one thing from this chapter, let it be this:

A launch should behave like telemetry, not theater.

Why the Old Launch Model Fails

Most launches fail quietly.

Not because the product is bad — but because learning stops too soon.

The traditional launch model assumes:

- Messaging is mostly “right” at GA

- Feedback arrives too late to matter

- Learning happens in quarterly reviews

But buyers don’t evaluate products in quarters. They evaluate them in conversations, clicks, objections, and moments of confusion.

If you’re not capturing those signals — and reacting to them — you’re flying blind.

The Reframe: Treat Your Launch Like a Self‑Learning Model

Instead of asking:

“Did the launch work?”

Ask:

“What did the market teach us this week?”

That shift changes everything.

A modern launch should:

- Make explicit narrative bets

- Be instrumented across every surface

- Run on a deliberate learning cadence

- Close the loop between marketing, sales, and product

- Produce institutional knowledge — not tribal memory

Let’s break that system down.

Step 1: Declare Your Narrative Bets

Every launch is a hypothesis.

The mistake teams make is pretending it’s not.

Before launch, force clarity by declaring three narrative bets — no more.

Examples:

- Trust: Buyers care most about credibility, governance, and proof

- Speed: Buyers value time‑to‑value and ease of adoption

- Control: Buyers want flexibility, extensibility, and autonomy

These are not taglines. They are beliefs about buyer psychology.

By naming them explicitly, you create something powerful:

- A shared mental model

- A way to measure resonance

- Permission to change your mind

If you don’t declare your bets, you can’t learn which ones are wrong.

Step 2: Instrument Every Surface

Most teams instrument funnels.

Very few instrument narratives.

A self‑learning launch tags everything:

- Web pages

- Emails

- SDR talk tracks

- Sales decks

- Demos

Each asset should carry simple metadata:

- Narrative (Trust / Speed / Control)

- Persona (CIO, Data Leader, Ops, etc.)

- Stage (Discovery, Evaluation, Validation)

This isn’t busywork.

It allows you to answer questions like:

- Which narrative drives deeper engagement?

- Where does confusion spike?

- Which personas are reacting — and which are stalling?

You can’t optimize what you don’t label.
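To make the labeling concrete, here is a minimal sketch of what a tagged asset and a per-narrative rollup might look like. The `AssetTag` class, the `asset_id` field, and the engagement scores are all hypothetical; use whatever identifiers and metrics your own stack already produces.

```python
from collections import defaultdict
from dataclasses import dataclass

# Allowed tag values, taken from the lists above. Extend as needed.
NARRATIVES = {"Trust", "Speed", "Control"}
STAGES = {"Discovery", "Evaluation", "Validation"}


@dataclass(frozen=True)
class AssetTag:
    """Metadata carried by one launch asset (page, email, deck, ...)."""
    asset_id: str   # hypothetical identifier, e.g. a URL slug
    narrative: str
    persona: str
    stage: str

    def __post_init__(self):
        # Reject labels outside the declared bets and stages.
        if self.narrative not in NARRATIVES:
            raise ValueError(f"unknown narrative: {self.narrative}")
        if self.stage not in STAGES:
            raise ValueError(f"unknown stage: {self.stage}")


def engagement_by_narrative(tags, engagement):
    """Average an engagement score per narrative.

    `engagement` maps asset_id -> a score you already collect
    (click-through rate, reply rate, ...). The metric is an assumption.
    """
    scores = defaultdict(list)
    for tag in tags:
        if tag.asset_id in engagement:
            scores[tag.narrative].append(engagement[tag.asset_id])
    return {name: sum(vals) / len(vals) for name, vals in scores.items()}


tags = [
    AssetTag("home-hero", "Trust", "CIO", "Discovery"),
    AssetTag("email-1", "Speed", "Ops", "Discovery"),
    AssetTag("demo-deck", "Speed", "Data Leader", "Evaluation"),
]
scores = {"home-hero": 0.5, "email-1": 0.25, "demo-deck": 0.75}  # made-up numbers
print(engagement_by_narrative(tags, scores))
```

The same grouping works for persona or stage: swap `tag.narrative` for `tag.persona` or `tag.stage` in the rollup.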

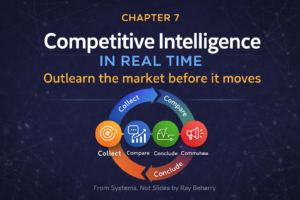

Step 3: Run the 60‑Day Launch Rhythm

Learning needs a cadence.

One of the most effective systems I’ve used is a 60‑day launch rhythm, counted from launch day (Day 0), with prep starting 30 days earlier:

- Day −30: Prep

Finalize narrative bets, instrumentation, and success signals

- Day 7: First Read

Early signal scan: what’s landing, what’s not

- Day 14: Pivot the Weakest Bet

Don’t polish the strongest message; fix the failing one

- Day 30: Consolidate

Double down on what’s working, retire noise

- Day 60: Evolve or Kill

Decide what becomes core messaging vs. what gets sunset
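The rhythm is easy to turn into calendar dates. The sketch below assumes Day 0 is launch day; the function names and the resonance scores fed to `weakest_bet` are illustrative, not a prescribed scoring method.

```python
from datetime import date, timedelta

# Day offsets relative to launch day (Day 0), as listed in the rhythm above.
CHECKPOINTS = [
    (-30, "Prep"),
    (7, "First Read"),
    (14, "Pivot the Weakest Bet"),
    (30, "Consolidate"),
    (60, "Evolve or Kill"),
]


def launch_calendar(launch_day):
    """Return (date, checkpoint name) pairs for a given launch date."""
    return [(launch_day + timedelta(days=offset), name)
            for offset, name in CHECKPOINTS]


def weakest_bet(resonance):
    """Pick the bet with the lowest score for the Day 14 pivot.

    `resonance` maps bet name -> score; how you score is up to you.
    """
    return min(resonance, key=resonance.get)


for when, name in launch_calendar(date(2025, 3, 3)):  # example date only
    print(when.isoformat(), name)
```

Putting these dates on the team calendar before launch is what keeps the Day 14 pivot from slipping.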

This rhythm forces discipline.

It replaces gut feel with structured learning.

Step 4: Close the Field Loop — Weekly

Your best launch data isn’t in dashboards.

It’s in conversations.

Call recordings and transcripts, from tools like Gong or Chorus, are gold if you review them intentionally.

A simple weekly loop:

- Pull 10–15 calls

- Look for narrative language buyers repeat (or reject)

- Identify new objections or reframes

- Adjust talk tracks immediately

Not quarterly. Weekly.

This is how launches stay alive.
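A rough first pass over the weekly batch can be automated. The sketch below assumes transcripts are already exported as plain text strings (it does not call any Gong or Chorus API), and the phrase lists are hypothetical. Keyword counting is a crude stand-in for listening to the calls, useful mainly for deciding which ones to read first.

```python
import re
from collections import Counter

# Hypothetical phrase lists per narrative bet; replace with your own language.
NARRATIVE_TERMS = {
    "Trust": ["governance", "audit", "compliance", "proof"],
    "Speed": ["time to value", "quick", "fast", "onboarding"],
    "Control": ["flexible", "customize", "extensible", "api"],
}


def count_narrative_mentions(transcripts):
    """Count how many calls mention each narrative at least once."""
    calls_per_narrative = Counter()
    for text in transcripts:
        lowered = text.lower()
        for narrative, terms in NARRATIVE_TERMS.items():
            # Word-boundary match so "api" does not match "rapid".
            if any(re.search(rf"\b{re.escape(t)}\b", lowered) for t in terms):
                calls_per_narrative[narrative] += 1
    return calls_per_narrative


calls = [
    "We need an audit trail before legal signs off.",
    "How fast can my team get through onboarding?",
    "Honestly the rapid rollout matters less than governance.",
]
print(count_narrative_mentions(calls))
```

A narrative that never shows up in buyer language after a few weeks is a candidate for the weakest bet.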

Step 5: Publish the Launch Learnings Memo

Most teams learn — then forget.

Institutional learning requires ritual.

At the end of each launch cycle, publish a simple memo:

What we believed

What changed

What we’re testing next

This does three things:

- Builds organizational memory

- Signals that learning is success

- Makes each future launch start smarter than the last

Over time, these memos become a competitive asset.

What This Means for Leaders

If you’re leading product marketing, growth, or go‑to‑market, the question isn’t:

“Did we launch on time?”

It’s:

“Did we build a system that gets smarter?”

Because markets don’t reward perfect launches.

They reward teams that learn faster than their competitors.

And that’s what Systems, Not Slides is really about.

The full Chapter 5 dives deeper into how to operationalize learning loops across launches, campaigns, and portfolios, turning go‑to‑market into a compounding advantage rather than a recurring fire drill.